The Human in the Loop

A Case Study in Captcha Farms, Residential Proxies, and the Economics of Form Spam

Introduction: When the Captcha Works Perfectly

The owner of a wedding photography site came to me with a familiar complaint: his website contact form was drowning in spam. Ten to thirteen garbage submissions per day, each one just plausible enough to waste time investigating before being discarded.

(For privacy, I’ll call the location “Coral Island” throughout this case study. The actual Caribbean destination has been anonymized.)

What made it frustrating wasn’t the volume—it was the futility. He’d already done the right things. His form had captcha protection, he’d installed anti-spam plugins, he’d even built a keyword blacklist targeting phrases that kept appearing in the junk submissions. None of it helped.

The spam kept coming…generic messages like “Please contact me” and “I want to know more”; nonsense in the venue field—company names, random words, once just “Male”; questions that had nothing to do with wedding photography: “Can medical insurance reimburse treatment costs?”

And the emails: real email addresses, harvested from data breaches, attached to fake submissions. When his form tried to send confirmation messages, they’d bounce back or, worse, reach confused strangers who’d reply asking why a Caribbean wedding photographer was contacting them.

Something was wrong. Either his captcha was broken, or he was dealing with something more sophisticated than the usual bot traffic. I suspected the former…but I was wrong.

Part One: The Initial Theory

Following the Code

My first hypothesis seemed reasonable: somewhere in the form’s code, the captcha validation was failing silently. Perhaps submissions were getting through without completing the puzzle at all.

I started where I usually start: examining the page source. The form was built with a popular WordPress plugin, and what I found looked like a mess. The JavaScript initialization code was loading twice, and the form’s honeypot field (a hidden trap designed to catch bots that fill every field they find) was duplicated in the HTML.

This pointed toward a caching conflict or plugin interference. If the captcha’s JavaScript was getting clobbered by duplicate execution, maybe the verification token wasn’t attaching to submissions properly. And if the server wasn’t enforcing captcha validation strictly, spam could sail through unchallenged.

It was a tidy theory. It explained the symptoms and it suggested a clear fix.

It was also…completely wrong.

The Test That Changed Everything

Before recommending any changes, I wanted to confirm the vulnerability. If submissions were bypassing captcha validation, I should be able to replicate it.

I crafted a test submission using curl, a command-line tool that lets you send HTTP requests directly, bypassing the browser entirely. No JavaScript, no captcha widget, no puzzle to solve. Just raw form data sent straight to the server. The server responded:

{"success":false,"data":{"message":"hCaptcha verification failed. Please try again."}}

The server rejected it. No captcha token, no submission accepted.

I tried variations: different form fields, different payloads, fresh authentication tokens…but every submission without a valid captcha solution was refused. The validation was working exactly as designed.
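The same check can be scripted. What follows is a minimal sketch in Python rather than curl, assuming a hypothetical endpoint URL and hypothetical field names (the real ones are not given here). The only detail taken from the test above is the shape of the rejection response.

```python
import json
import urllib.parse
import urllib.request

# Hypothetical endpoint: the real form's submission URL is not given in this case study.
FORM_ENDPOINT = "https://example.com/wp-admin/admin-ajax.php"


def build_tokenless_request(fields: dict[str, str]) -> urllib.request.Request:
    """Build a form POST that deliberately carries no captcha token."""
    body = urllib.parse.urlencode(fields).encode("utf-8")
    return urllib.request.Request(FORM_ENDPOINT, data=body, method="POST")


def was_rejected(response_text: str) -> bool:
    """Return True when the JSON response reports an unsuccessful submission."""
    payload = json.loads(response_text)
    return payload.get("success") is False


# Hypothetical field names, for illustration only.
request = build_tokenless_request({"name": "Test", "message": "test"})

# Sending is left commented out so the sketch has no side effects:
# with urllib.request.urlopen(request) as response:
#     print(was_rejected(response.read().decode("utf-8")))
```

If `was_rejected` returns True for every token-free variation, the server is enforcing the captcha and the spam must be arriving with valid solutions.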

Which meant the spam wasn’t bypassing the captcha. The spam was solving it.

Part Two: Understanding the Adversary

The Uncomfortable Truth About Captchas

Here’s something most people don’t realize about captcha protection: it’s not a wall; it’s more like a toll booth.

Services exist (2captcha, anti-captcha, and dozens of others) where you can pay to have captchas solved at scale. The going rate is roughly two to three dollars per thousand solves. Submit a puzzle, wait a few seconds, receive the solution. At that price point, captcha protection becomes a minor line item in a spam operation’s budget.
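To put that in perspective for a single form, here is a rough back-of-the-envelope calculation. It uses only the figures quoted in this case study (two to three dollars per thousand solves, ten to thirteen spam submissions per day) and assumes one paid solve per submission, which is a simplification.

```python
# Figures quoted in the case study; the one-solve-per-submission ratio is an assumption.
PRICE_PER_THOUSAND_USD = (2.0, 3.0)  # low and high price for 1,000 solves
SPAM_PER_DAY = (10, 13)              # low and high daily submissions on this form


def solve_cost(solves: int, price_per_thousand: float) -> float:
    """Cost in dollars of buying the given number of captcha solves."""
    return solves * price_per_thousand / 1000


low = solve_cost(SPAM_PER_DAY[0], PRICE_PER_THOUSAND_USD[0])
high = solve_cost(SPAM_PER_DAY[1], PRICE_PER_THOUSAND_USD[1])
print(f"${low:.3f} to ${high:.3f} per day")
```

At these rates, getting past the captcha on this form costs the operator a few cents a day at most.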

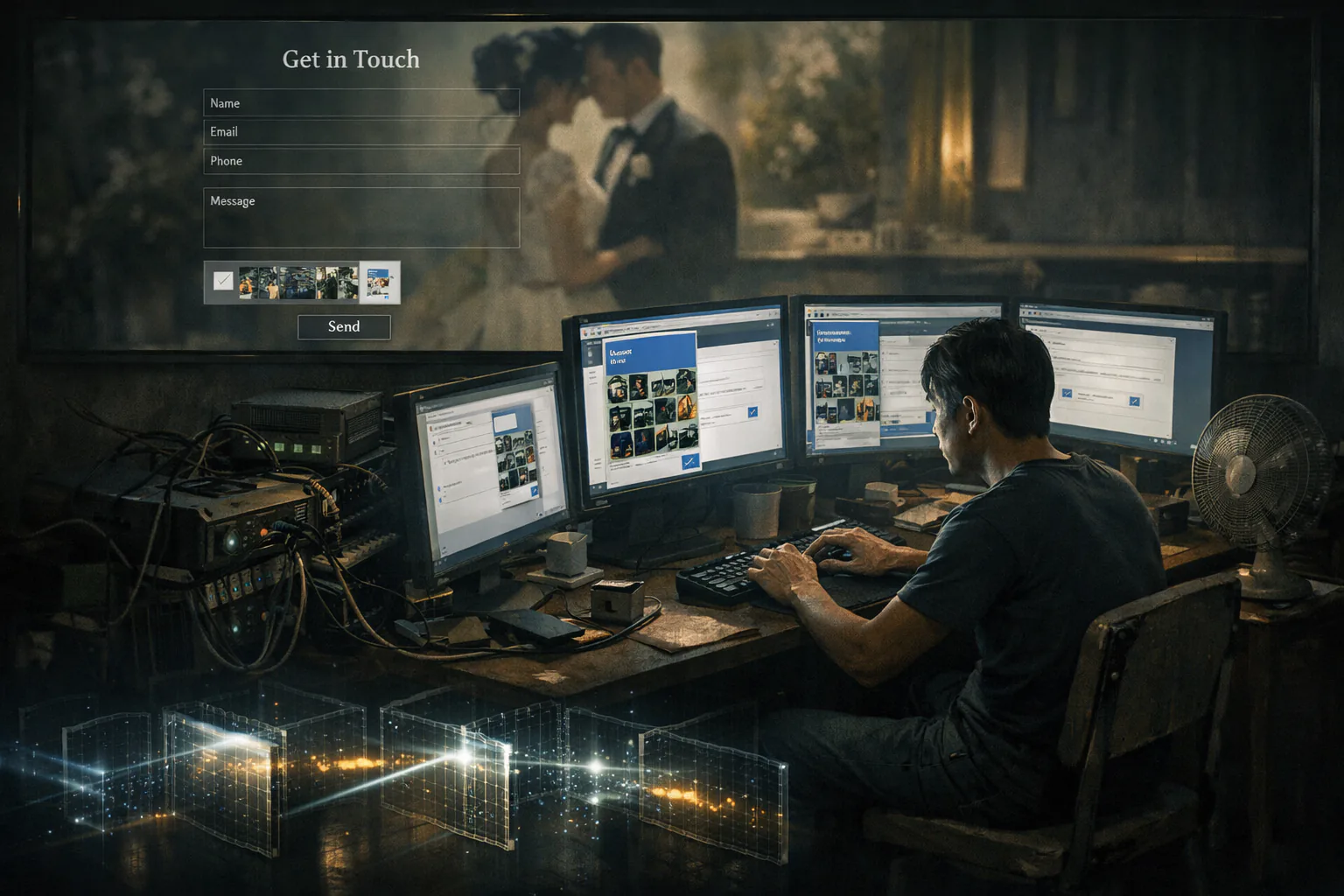

The workers doing the solving are real humans, typically in developing countries, earning fractions of pennies per puzzle. It’s a grim little economy: your “I’m not a robot” checkbox is being clicked by someone in Bangladesh or Venezuela making less than a dollar an hour to prove to websites that a spammer in Eastern Europe is definitely a real person who wants to know about wedding photography packages.

But the captcha farms were only half the picture.

Residential Proxies: The Perfect Disguise

When I traced the IP addresses from the spam submissions, I expected to find the usual suspects: overseas data centers, known bot networks, cheap VPS providers with terrible reputations. Security services have been flagging these for years. They’re easy to block.

Instead, I found Comcast, AT&T, Verizon, Cox, T-Mobile.

Every single IP address traced back to a major American residential internet provider. These weren’t server farms or bot networks. They were home internet connections—real people’s home internet connections!

This is what a “residential proxy network” looks like in practice. Somewhere, someone downloaded a free VPN app or browser extension that promised to protect their privacy. What the fine print didn’t emphasize was that in exchange for free service, they were selling access to that user’s internet connection. The spammer’s traffic gets routed through a grandmother’s Comcast connection in Ohio, and to the rest of the internet, it looks completely legitimate.

It’s a parasitic ecosystem. The VPN companies get revenue. The spammers get clean IP addresses. The only losers are the unwitting users whose connections become laundry services for fraud, and, of course, the businesses being targeted.

The Economics of Indifference

Understanding this helped me understand the attack. This wasn’t targeted. Nobody was specifically trying to harm a Caribbean wedding photographer. This was industrial-scale spray-and-pray: hit thousands of contact forms across thousands of websites, harvest whatever data comes back, move on.

The spammers don’t care about individual targets. They’re running volume operations: a few dollars for captcha solving, access to residential proxy networks, a template message that vaguely fits most contact forms. They probably don’t even remember this site exists…

…Which, counterintuitively, was the key to stopping them.

Part Three: The Pattern in the Noise

Mining the Submission Data

With the technical theory debunked, I shifted to analyzing what the attackers were actually submitting. If I couldn’t block them at the gate, maybe I could identify them by their behavior.

I pulled the form submission data and started looking for patterns that distinguished spam from legitimate inquiries. It didn’t take long.

Destination weddings require planning. Real couples inquiring about photography on Coral Island are booking months in advance—coordinating flights, venues, guest travel. Of the 135 submissions I analyzed, legitimate inquiries had event dates weeks or months in the future.

The spam told a different story. Thirty-four percent requested same-day events. Fifty-nine percent requested dates within one week. Two submissions had dates in the past—a nice confirmation that bots were bypassing the form’s date picker entirely and submitting directly to the server.

Nobody books a destination wedding photographer with one week’s notice. Nobody.

The venue field was even more telling. Real couples mentioned specific Coral Island locations: particular resorts, beaches, landmarks. Spam submissions contained company names, random words, or blank entries: “CACI International”, “Kibo Commerce”, “Male”.

And the messages themselves were generic templates designed to fit any contact form: “Please contact me”, “I want to know more”, “My colleague tried it and felt good, so he recommended it to me”. That last one appeared verbatim in multiple submissions, the unmistakable signature of a reused template.
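This kind of analysis needs very little tooling. The sketch below shows two of the checks described above: bucketing submissions by how far ahead the event date falls, and spotting messages that repeat verbatim. The function names and the bucket boundaries are my own choices for illustration, not taken from the original analysis.

```python
from collections import Counter
from datetime import date


def lead_time_bucket(submitted: date, event: date) -> str:
    """Classify a submission by the gap between submission date and event date."""
    days = (event - submitted).days
    if days < 0:
        return "past"
    if days == 0:
        return "same day"
    if days <= 7:
        return "within a week"
    return "more than a week"


def repeated_messages(messages: list[str], min_count: int = 2) -> dict[str, int]:
    """Return messages that appear at least min_count times, ignoring case and outer whitespace."""
    counts = Counter(message.strip().lower() for message in messages)
    return {message: n for message, n in counts.items() if n >= min_count}
```

Note that the buckets here are disjoint, so “within a week” excludes same-day requests. Running `Counter` over the bucket labels for every submission gives the kind of percentage breakdown quoted above.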

The Defense Strategy

The goal wasn’t to make spam impossible. Against an adversary with captcha farms and residential proxies, “impossible” isn’t realistic. The goal was to make this particular form not worth the effort.

These operations run on volume. They’re processing thousands of sites simultaneously, using templates and automation. They don’t have time to research individual targets; they fill forms quickly and move on to the next one.

Anything that requires site-specific knowledge breaks their model.

I therefore implemented three layers:

Date validation: Server-side rejection of any submission where the event date was in the past or within seven days. Based on the data, this alone would catch 59% of spam with zero false positives.

Contextual validation: A new required field asking “On what island will this event be taking place?” with validation requiring the answer to contain the island’s name. Real couples answer this without thinking. Spam operators running templates across thousands of sites don’t take time to understand each one; they fill fields quickly and move on. A question requiring actual knowledge of the business disrupts that workflow.

Custom honeypot: During testing, I discovered a bug in the form plugin’s built-in honeypot. Due to how the plugin handled multi-page forms, the honeypot field was rendering twice in the HTML. An automated script could fill the first instance and leave the second blank, bypassing the check entirely. I implemented a custom server-side honeypot independent of the plugin’s flawed version.

The combined approach targeted the economics of the operation, not its technical capabilities. The attackers could adapt (read the site, figure out the island question, use realistic dates), but doing so would require individual attention to each target, which destroys the entire value proposition of mass spam operations.
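The three layers can be sketched as a single server-side check. This is an illustrative Python version, not the code deployed on the site (which ran on WordPress). The field names `event_date`, `island`, and `website_url` are hypothetical, and “Coral Island” is the anonymized name used throughout this case study.

```python
from datetime import date, datetime, timedelta

ISLAND_NAME = "coral island"       # anonymized island name, compared case-insensitively
MIN_LEAD_TIME = timedelta(days=7)  # events in the past or within seven days are rejected


def validate_submission(fields: dict[str, list[str]], today: date) -> list[str]:
    """Return the reasons a submission should be rejected; an empty list means it passes.

    `fields` maps each field name to every value submitted under that name,
    so duplicated fields (such as a honeypot rendered twice) are all visible.
    """
    reasons = []

    # Layer 1: date validation with an explicit MM-DD-YYYY format.
    raw_date = fields.get("event_date", [""])[0]
    try:
        event_date = datetime.strptime(raw_date, "%m-%d-%Y").date()
    except ValueError:
        reasons.append("unparseable event date")
    else:
        if event_date - today <= MIN_LEAD_TIME:
            reasons.append("event date in the past or within seven days")

    # Layer 2: contextual validation, the answer must contain the island's name.
    answer = fields.get("island", [""])[0]
    if ISLAND_NAME not in answer.lower():
        reasons.append("island question not answered")

    # Layer 3: honeypot, every submitted instance of the hidden field must be empty.
    if any(value.strip() for value in fields.get("website_url", [])):
        reasons.append("honeypot filled")

    return reasons
```

Checking every instance of the honeypot field, rather than only one, is what closes the gap left by a field that renders twice.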

Part Four: The Implementation

When Code Doesn’t Work Like It Should

Server-side validation sounds straightforward until you’re actually implementing it.

The date validation worked immediately, or so I thought. Initial testing showed it correctly rejecting submissions with dates too soon or in the past. Then I noticed that submissions with event dates on or after the 13th of a month were being rejected when they shouldn’t have been.

The culprit was date format parsing. The form submitted dates as “01-25-2026” (MM-DD-YYYY, American format), but PHP’s default date parser reads dash-separated dates day-first. “01-25-2026” became “day 1, month 25”, which is invalid. Any date with a day after the 12th failed because there’s no 13th month.

A one-line fix: explicitly specifying the date format rather than letting PHP guess. The kind of bug that’s obvious in retrospect and invisible until it ruins someone’s day.
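The same ambiguity is easy to reproduce outside PHP. The sketch below is a Python analogue, not the fix applied on the site. It parses the example date with a day-first format and with an explicit month-first format. In PHP, an explicit-format call such as `DateTime::createFromFormat` serves the same purpose, though the exact function used here is not recorded.

```python
from datetime import datetime


def parse_day_first(value: str) -> datetime:
    """Parse a dash-separated date as DD-MM-YYYY (the wrong reading for this form)."""
    return datetime.strptime(value, "%d-%m-%Y")


def parse_month_first(value: str) -> datetime:
    """Parse a dash-separated date as MM-DD-YYYY (the format the form actually sends)."""
    return datetime.strptime(value, "%m-%d-%Y")


raw = "01-25-2026"  # the example date from the text

parsed = parse_month_first(raw)  # 25 January 2026, as intended

try:
    parse_day_first(raw)
except ValueError as error:
    # There is no month 25, so the day-first reading fails outright.
    print(f"day-first parse failed: {error}")
```

In this Python analogue, a date such as “01-12-2026” does not fail under the day-first reading. It is silently read as 1 December instead of 12 January, which is why explicit formats are worth the extra line.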

The timezone validation I’d planned as a reserve measure proved more difficult. The idea was elegant: capture the browser’s timezone setting and flag mismatches with the apparent IP location. A submission appearing to come from Ohio but reporting an “Asia/Manila” timezone would be suspicious. (That is primarily how I solved another form spam case.)
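The comparison itself is simple. Here is a minimal sketch, assuming the browser’s IANA timezone name has already been captured (in a browser it is typically read with `Intl.DateTimeFormat().resolvedOptions().timeZone`) and that some IP-geolocation lookup has returned a region code. The lookup table and region codes are hypothetical.

```python
# Hypothetical table: region codes, as an IP-geolocation service might return them,
# mapped to the IANA timezone names expected for that region.
EXPECTED_TIMEZONES = {
    "US-OH": {"America/New_York"},
    "US-IL": {"America/Chicago"},
}


def timezone_mismatch(ip_region: str, browser_timezone: str) -> bool:
    """Return True when the browser's timezone is implausible for the IP's region.

    Unknown regions return False, so the check never flags what it cannot judge.
    """
    expected = EXPECTED_TIMEZONES.get(ip_region)
    if expected is None:
        return False
    return browser_timezone not in expected
```

With this table, `timezone_mismatch("US-OH", "Asia/Manila")` returns True. A mismatch is better treated as a signal for review than as grounds for automatic rejection, since travelers and VPN users produce honest mismatches too.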

Unfortunately, the form plugin’s architecture didn’t cooperate. Its form submission process serialized data before any JavaScript event listeners could intercept it. I tried multiple approaches—query parameters, AJAX interception, form event listeners with capture phase. None worked. The plugin was too opinionated about how data flowed.

I shelved timezone validation and noted that if we needed it in the future, switching to a different form solution would be more practical than fighting the current one.

Cleaning House

While implementing the fixes, I addressed the secondary issues I’d found during reconnaissance.

Three anti-spam plugins were installed but ineffective. One had its settings page completely broken—it wouldn’t even load. Another was configured with the slowest blocking method and had its main protection features disabled; it was also the likely cause of the duplicate honeypot field I’d found. The third had a keyword blacklist the client had thoughtfully configured with common spam phrases, but its integration with the form plugin was broken—I tested it by submitting a form containing a blacklisted phrase, and it went through without being blocked. Zero hits on any keyword despite weeks of spam.

All three were removed. The actual protection came from the captcha (which was working) and the new contextual validation. The plugins were just adding complexity and potential conflicts.

Part Five: The Aftermath

A Week of Silence

The changes went live on a Friday. By the following Monday, the client reported zero spam submissions over the weekend. Legitimate inquiries continued arriving normally—complete with specific Coral Island venues, realistic dates, and actual questions about photography packages.

A week later, still nothing. Whatever operation had been targeting the form had apparently moved on to easier prey, exactly as predicted.

The Email Mystery

Then a different problem surfaced. The client noticed that two legitimate form submissions hadn’t triggered the expected email notification. The inquiries were recorded in the form plugin’s database, but no email arrived.

This wasn’t related to anything we’d implemented (the email system hadn’t been touched), but it still required investigation.

The site used an SMTP plugin connected to Gmail’s API for sending. I sent test emails through the plugin’s interface: they arrived fine. I checked the server’s PHP error logs around the time of the missed submissions: nothing from the form plugin or email system. The Gmail API connection bypasses the server’s mail system entirely, so there were no local mail logs to examine.

The two affected submissions had nothing obviously unusual about them. One had an IPv6 address, but that shouldn’t matter for email sending.

Most likely culprit: a transient Gmail API hiccup. Token refresh timing, rate limiting, brief network interruption, any of dozens of things that could cause a single request to fail without leaving evidence.

The solution was logging. I recommended installing an email logging plugin to capture every send attempt and its result. If it happened again, we’d have data instead of speculation.
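What such a plugin does can be sketched in a few lines: wrap the send call and record every attempt along with its outcome. The version below is an illustration in Python, not the plugin’s code. The logger name and the `send` callable are hypothetical stand-ins for whatever actually delivers the mail.

```python
import logging
from typing import Callable

logger = logging.getLogger("mail_audit")  # hypothetical logger name


def send_with_logging(send: Callable[[str, str], None], recipient: str, subject: str) -> bool:
    """Call `send` and log the attempt and its result; return True on success."""
    logger.info("attempt recipient=%s subject=%r", recipient, subject)
    try:
        send(recipient, subject)
    except Exception:
        # logger.exception records the traceback, so a transient failure leaves evidence.
        logger.exception("failed recipient=%s subject=%r", recipient, subject)
        return False
    logger.info("sent recipient=%s subject=%r", recipient, subject)
    return True
```

The point of the wrapper is that a failed request no longer disappears: the attempt, the error, and the timestamp added by the logging handler are all on record.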

It’s an unsexy recommendation—“install a plugin that records things”—but it’s the difference between investigating a problem and guessing at one. The client’s contact form was now protected against spam, but email delivery depends on services outside our control. When things fail silently, logs are how you find out why.

Lessons Learned

For Business Owners

Captchas are not security. They’re speed bumps. Anyone willing to pay two dollars per thousand solves can drive right through. If you’re facing sophisticated spam, captcha alone won’t save you.

Your IP logs might be useless. Residential proxy networks mean spam can arrive from IP addresses indistinguishable from legitimate customers. Blocking by IP is increasingly futile.

Contextual friction beats technical barriers. A simple question that requires knowledge of your business (something a real customer would know immediately but a mass spam operation would need to research) can be more effective than any technical countermeasure.

Plugin sprawl creates false confidence. Three anti-spam plugins were installed and providing zero protection. Complexity isn’t security. Verify that your tools actually work.

For Security Practitioners

Test your hypotheses before acting on them. I was ready to recommend architectural changes based on a theory about JavaScript clobbering. A five-minute curl test proved the theory wrong. The time spent testing saved far more time than it cost.

Understand the adversary’s economics. Technical countermeasures that raise costs or require individual attention are more effective than trying to build impenetrable walls. Mass operations optimize for volume; anything requiring per-target customization disrupts that optimization.

Read the data before designing solutions. The date pattern that identified 59% of spam with zero false positives came from analyzing actual submissions, not from guessing at what spam might look like.

Watch for plugin bugs in edge cases. The duplicate honeypot field was only visible in multi-page form rendering—something you wouldn’t catch in basic testing. When standard security controls aren’t working, dig into whether the implementation is correct.

Log everything. When the email notifications failed, we had nothing to investigate because nothing was being recorded. Silent failures are worse than loud ones. The fix is almost always “capture more data.”

Conclusion

Somewhere, someone is still sitting at a computer, solving captcha puzzles for fractions of a penny, not knowing or caring that one of their solves would have gone to a Coral Island wedding photography site if we hadn’t made it not worth the effort.

The residential proxy networks are still operating, routing spam through a grandmother’s Comcast connection, turning unsuspecting free VPN users into unwitting accomplices in operations they’d be horrified to know about.

And the spam operations themselves continue, hitting thousands of other contact forms that haven’t been hardened, harvesting data, wasting people’s time.

We didn’t stop them…we just made this one form not worth the trouble. They moved on. That’s the unglamorous reality of defending against mass operations: you can’t shut them down, but you can make yourself a poor target. When the cost of attacking you exceeds the expected return, economics does the rest.

The photographer’s inbox is quiet now. Legitimate inquiries arrive with specific dates, specific venues, and the island’s name somewhere in the details. The spam went elsewhere, looking for forms that haven’t learned to ask the right questions.

Case study prepared by Stonegate Web Security. Details have been anonymized to protect client confidentiality.
