Click Fraud & Lead Form Spam Investigation

Tracing a Manila Click Farm Through Timezone Leaks and a Single Reused Tracking ID

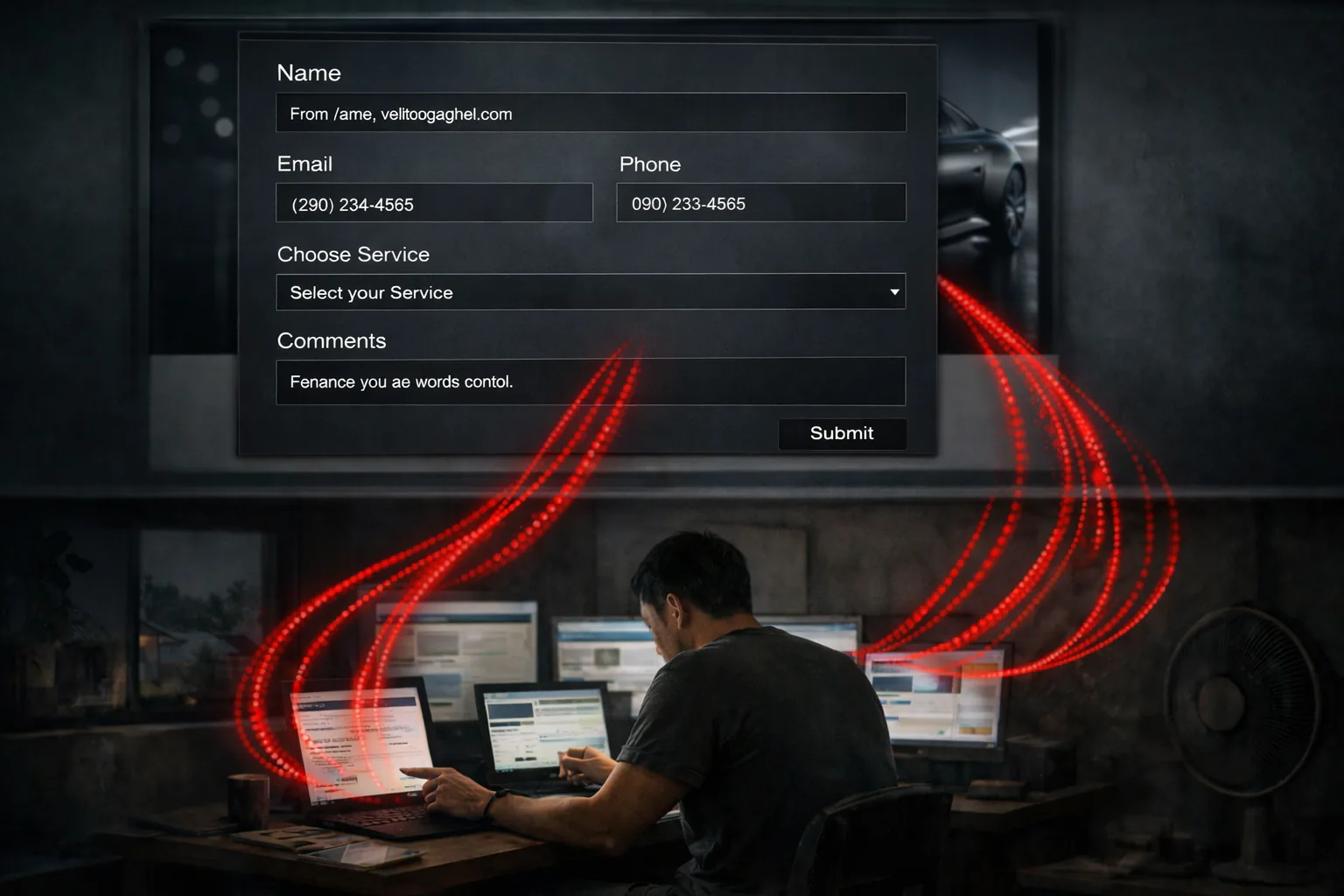

Introduction: When Blocking Doesn’t Block

The client had done everything right—or so it seemed. After weeks of fake form submissions flooding his ceramic coating business, he had compiled a blocklist of 95 IP addresses in Cloudflare. He set up a WAF rule to block them. He waited for the spam to stop.

It didn’t.

Five to six fraudulent submissions continued arriving daily, each one formatted just realistically enough to waste time investigating. Pennsylvania phone numbers. Common American names. Vehicle details that matched his service area. The only tell was the timing—they arrived like clockwork around 9 AM, but 9 AM in a timezone twelve hours ahead of his.

When he checked his Cloudflare analytics, something strange stood out: his carefully crafted blocklist had logged exactly zero blocked requests. Ninety-five IP addresses. Zero hits. Not a single block in twenty-four hours despite ongoing submissions.

Something was fundamentally broken, but it wasn’t what he thought.

Part One: The Invisible Shield

Understanding the Architecture

The client’s business ran on two separate web properties. His main WordPress site lived on his primary domain, proxied through Cloudflare with all security features active. But his lead generation happened elsewhere—on a landing page hosted through GoHighLevel, a marketing platform popular with service businesses.

This landing page lived on a subdomain: a CNAME record pointing to GoHighLevel’s infrastructure. And here was the problem hiding in plain sight.

When you set up a third-party service like GoHighLevel, the setup instructions tell you to configure your DNS record as “DNS only”—the gray cloud icon in Cloudflare’s dashboard. This isn’t optional. These platforms need the traffic to flow directly to their servers so they can issue SSL certificates, track analytics, and handle routing internally.

The client followed these instructions correctly. His landing page worked perfectly. What nobody explained was that by setting DNS to “gray cloud,” he had placed his most important lead capture form entirely outside Cloudflare’s protection perimeter.

Every WAF rule he created? Bypassed. Every IP he blocked? Ignored. Bot Fight Mode, rate limiting, geo-blocking—none of it applied. Traffic went directly from visitors to GoHighLevel’s servers, never touching Cloudflare’s security layer.

The 95-IP blocklist wasn’t failing. It had never been activated in the first place.

The Perimeter Problem

This isn’t a misconfiguration. It’s a fundamental limitation that catches countless small businesses.

When you proxy traffic through Cloudflare (orange cloud), your visitors connect to Cloudflare first. Cloudflare inspects the request, applies your security rules, and if everything passes, forwards the request to your actual server. This is where all the protection happens—at the edge, before traffic reaches you.

When you use DNS-only mode (gray cloud), Cloudflare only handles DNS resolution, pointing browsers to your server’s IP address. The actual web traffic bypasses Cloudflare entirely. No inspection, no rules, no protection.

For SaaS platforms that host your content on their infrastructure (landing pages, marketing funnels, booking systems), DNS-only mode is usually required. You’re not hosting the content yourself, so there’s nothing for Cloudflare to proxy to. The platform handles everything.

This creates a blind spot. Business owners see all their domains in Cloudflare’s dashboard and assume they’re all protected. They configure security rules, add IP blocklists, and feel secure. Meanwhile, their most critical conversion points—the forms that capture leads and revenue—sit completely outside that protection.
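One way to make this blind spot visible is to list which hostnames are actually proxied. The sketch below assumes the zone's DNS records have already been exported as objects carrying a `proxied` flag, which is how Cloudflare's API represents the orange/gray cloud setting. The `DnsRecord` shape, the function name, and the hostnames are illustrative, not the client's real zone.

```typescript
// Minimal shape of an exported DNS record; only the fields used here are modeled.
interface DnsRecord {
  name: string;     // hostname (hypothetical examples below)
  type: string;     // "A", "AAAA", "CNAME", "MX", ...
  content: string;  // target IP or hostname
  proxied: boolean; // true = orange cloud, false = gray cloud (DNS only)
}

// Return web-facing hostnames whose traffic never passes through Cloudflare's edge.
function findUnprotectedHosts(records: DnsRecord[]): string[] {
  const webTypes = ["A", "AAAA", "CNAME"];
  return records
    .filter((r) => webTypes.indexOf(r.type) !== -1 && !r.proxied)
    .map((r) => r.name);
}

// Example data: made-up hostnames and documentation-range IPs.
const exampleRecords: DnsRecord[] = [
  { name: "example.com", type: "A", content: "203.0.113.10", proxied: true },
  { name: "offers.example.com", type: "CNAME", content: "saas-host.example.net", proxied: false },
  { name: "example.com", type: "MX", content: "mail.example.com", proxied: false },
];

const unprotectedHosts = findUnprotectedHosts(exampleRecords);
console.log(unprotectedHosts); // hostnames where WAF rules and blocklists never run
```

Any hostname this returns is one where a WAF rule or IP blocklist will log zero hits no matter how much hostile traffic arrives.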

The client’s main website was protected. His money-making form was not.

Part Two: The Attack Pattern

Beyond Simple Spam

With the infrastructure problem identified, I turned to the form submissions themselves. I exported the client’s lead data: 102 total entries, spanning about a month.

What I found wasn’t typical bot spam. This was something more deliberate.

Of those 102 submissions, 85 shared an identical browser timezone: Asia/Manila. Every single fake lead reported GMT+08:00. The remaining 17 showed America/New_York—the client’s actual service area. The real leads and the fake leads were easily distinguishable by a single data point the attacker didn’t think to hide.
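The split is easy to reproduce from an export. This is a minimal sketch, assuming each exported row carries the browser-reported IANA timezone in a `timezone` field (my name for it, not necessarily the platform's column name). The rows are synthetic and only mirror the 85/17 split described above.

```typescript
// Only the field this tally needs; a real export has many more columns.
interface Lead {
  timezone: string; // IANA name captured with the submission, e.g. "Asia/Manila"
}

// Count submissions per reported browser timezone.
function countByTimezone(leads: Lead[]): Record<string, number> {
  const counts: Record<string, number> = {};
  for (const lead of leads) {
    counts[lead.timezone] = (counts[lead.timezone] ?? 0) + 1;
  }
  return counts;
}

// Synthetic rows mirroring the split in the text, not the real export.
const sampleLeads: Lead[] = [
  ...Array.from({ length: 85 }, () => ({ timezone: "Asia/Manila" })),
  ...Array.from({ length: 17 }, () => ({ timezone: "America/New_York" })),
];

const timezoneCounts = countByTimezone(sampleLeads);
console.log(timezoneCounts);
```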

But the real revelation came from the Google Ads tracking data.

One Click, Eighty-Five Leads

Every Google Ads click generates a unique identifier called a GCLID—a tracking parameter that follows the visitor through the funnel and connects their actions back to the specific ad they clicked. It’s how Google knows which campaigns are working.

Across all 85 fraudulent submissions, I found exactly one GCLID. The same click ID appeared eighty-five times.

This was click fraud, but not the kind most people imagine. The attacker hadn’t clicked the ad eighty-five times to drain the client’s ad budget. They clicked once, captured the URL complete with its tracking parameter, and then submitted fake leads using that same URL over and over.
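Spotting this kind of reuse is a grouping exercise. The sketch below assumes each lead record exposes the captured click ID as `gclid` (or `null` when the visit was untagged). The field name, the threshold parameter, and the placeholder IDs are my own illustration.

```typescript
interface TrackedLead {
  gclid: string | null; // click ID captured from the landing-page URL, if any
}

interface GclidUsage {
  gclid: string;
  submissions: number;
}

// Return click IDs attached to more than `maxPerClick` submissions, most reused first.
function findReusedGclids(leads: TrackedLead[], maxPerClick = 1): GclidUsage[] {
  const counts = new Map<string, number>();
  for (const { gclid } of leads) {
    if (!gclid) continue; // untagged submissions carry no click ID
    counts.set(gclid, (counts.get(gclid) ?? 0) + 1);
  }
  return Array.from(counts, ([gclid, submissions]) => ({ gclid, submissions }))
    .filter((usage) => usage.submissions > maxPerClick)
    .sort((a, b) => b.submissions - a.submissions);
}

// Synthetic data: the click IDs are made-up placeholders.
const trackedLeads: TrackedLead[] = [
  ...Array.from({ length: 85 }, () => ({ gclid: "FAKE_GCLID_AAA" })),
  { gclid: "FAKE_GCLID_BBB" },
  { gclid: null },
];

const reusedGclids = findReusedGclids(trackedLeads);
console.log(reusedGclids);
```

A legitimate visitor can occasionally submit twice from one click, so the threshold is a judgment call. Eighty-five submissions from one click is not a borderline case.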

The client paid for one ad click — maybe two or three dollars. Minimal direct damage. But each fake form submission could register as a conversion. Google Ads can be configured to count every conversion from a click, not just the first one. If the client’s campaign used that setting, Google’s systems would see 85 conversions attributed to a single click — an impossible ratio that wouldn’t just look suspicious to a human reviewer, but would actively mislead the algorithm.

This wasn’t about draining ad spend. It was about poisoning the data that controlled where ad spend went.

Ironically though, the copy/paste shortcut was also a mistake. A more sophisticated operation would have generated fresh clicks—and fresh GCLIDs—for each submission batch. By reusing a single tracking ID eighty-five times, the attacker created an obvious forensic fingerprint and limited the attack’s effectiveness.

There’s a practical explanation for the reuse: the client’s ads were geo-targeted to a specific Pennsylvania service area, and consumer VPN exit nodes rarely geolocate to suburban Philadelphia. The attacker likely couldn’t get the ad to appear reliably — or was simply handed the URL by whoever hired them. Either way, they grabbed a working link once and ran with it. The reuse made operational sense, but it created a glaring evidence trail that a more careful operation would have avoided.

The Submissions Themselves

The fake leads followed a pattern that suggested semi-automated generation with human oversight. Names came from a rotating wordlist—Jessica Lambert, Hannah Fitzgerald, and Grace Sullivan each appeared multiple times. Email addresses followed a formula: first initial, last name, optional numbers, @ a consumer email provider.

Phone numbers showed more craft. They used legitimate Pennsylvania area codes—267, 215, 484, 610—matching the client’s service area. Vehicle information pulled from common makes and models. Someone had put thought into making these submissions look plausible at first glance.

But they hadn’t been careful enough. The names repeated. The emails were too formulaic. And they didn’t bother hiding their timezone.
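Both tells can be checked mechanically. This is a rough sketch, assuming leads expose `name` and `email` fields. The email rule encodes only the formula described above (first initial, last name, optional digits), and the addresses in the sample are invented for illustration.

```typescript
interface Contact {
  name: string;
  email: string;
}

// Names (normalized to lowercase) that appear on more than one submission.
function repeatedNames(leads: Contact[]): string[] {
  const counts: Record<string, number> = {};
  for (const { name } of leads) {
    const key = name.trim().toLowerCase();
    counts[key] = (counts[key] ?? 0) + 1;
  }
  return Object.keys(counts).filter((key) => counts[key] > 1);
}

// True when the local part is "first initial + last name + optional digits".
function isFormulaicEmail({ name, email }: Contact): boolean {
  const parts = name.trim().toLowerCase().split(/\s+/);
  if (parts.length < 2) return false;
  const stem = parts[0].charAt(0) + parts[parts.length - 1];
  const local = email.toLowerCase().split("@")[0];
  return local.startsWith(stem) && /^\d*$/.test(local.slice(stem.length));
}

// Names come from the investigation; the email addresses are made up.
const sampleContacts: Contact[] = [
  { name: "Jessica Lambert", email: "jlambert42@example.com" },
  { name: "Hannah Fitzgerald", email: "hfitzgerald@example.com" },
  { name: "Jessica Lambert", email: "jlambert7@example.com" },
  { name: "Pat Doe", email: "pat.doe.detailing@example.com" },
];

const duplicateNames = repeatedNames(sampleContacts);
const formulaicCount = sampleContacts.filter(isFormulaicEmail).length;
console.log(duplicateNames, formulaicCount);
```

Neither signal is conclusive alone, since plenty of real people use a first-initial-last-name address. They are useful as corroboration alongside the timezone and click ID evidence.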

Part Three: Tracing the Infrastructure

Following the IPs

The form data included IP addresses for each submission. I extracted the unique IPs from the Manila-timezone entries and ran them through ownership lookups.
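The extraction step is a filter plus a de-duplication. A minimal sketch follows, assuming `timezone` and `ip` fields on each row; the addresses are from reserved documentation ranges, not the real ones, and the ownership lookup itself is left out because it depends on whichever WHOIS or ASN service you use.

```typescript
interface LeadWithIp {
  timezone: string;
  ip: string;
}

// Unique source IPs among submissions reporting the given timezone, in first-seen order.
function uniqueIpsForTimezone(leads: LeadWithIp[], timezone: string): string[] {
  const ips = leads
    .filter((lead) => lead.timezone === timezone)
    .map((lead) => lead.ip.trim());
  return Array.from(new Set(ips));
}

// Synthetic rows using documentation-range addresses.
const leadsWithIps: LeadWithIp[] = [
  { timezone: "Asia/Manila", ip: "192.0.2.10" },
  { timezone: "Asia/Manila", ip: "198.51.100.7" },
  { timezone: "Asia/Manila", ip: "192.0.2.10" },
  { timezone: "America/New_York", ip: "203.0.113.5" },
];

const manilaIps = uniqueIpsForTimezone(leadsWithIps, "Asia/Manila");
console.log(manilaIps);
```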

Twenty-four distinct IP addresses emerged, tracing back to a familiar pattern in the fraud ecosystem:

Several addresses belonged to M247 and Datacamp Limited—infrastructure providers that host consumer VPN services including Mullvad, Proton VPN, and Surfshark. Others pointed to datacenter hosting companies in Seattle. One IP traced to HostRoyale Technologies, a provider commonly used for VPN endpoints.

And then there was the slip-up.

One IP address traced directly to a Philippines ISP. Not a VPN. Not a proxy. Someone’s actual internet connection in Manila.

The attacker had forgotten to enable their VPN for at least one submission, leaking their real location.

Why VPNs Weren’t Enough

Consumer VPNs do exactly one thing: they change your apparent IP address. Traffic that would normally show a Manila origin instead appears to come from Atlanta, Seattle, or wherever the VPN provider operates exit nodes.

This can be enough to fool geo-targeting under the right circumstances. According to the client, his Google Ads campaign was set to show ads only to “people in your targeted locations” (the strict setting), targeting his Pennsylvania service area. I didn’t have access to the account, so I couldn’t verify that directly. But if the attacker connected through a VPN exit node that happened to geolocate near that region, Google would see a local IP address and serve the ad.

However, VPNs don’t change everything. They can’t reach into your operating system and modify your clock settings.

When a web page asks your browser what timezone you’re in—using a JavaScript call to the Internationalization API—the browser reports what your operating system tells it. The attacker’s VPN made them appear to be in the USA. Their browser still reported that their computer’s clock was set to Asia/Manila.
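For reference, the read itself is two standard calls. This is a minimal sketch of what a page-side script might collect; the interface and function names are mine, and how a given form platform stores the values is not shown.

```typescript
interface TimezoneSignals {
  timeZone: string;      // IANA name from the OS settings, e.g. "Asia/Manila"
  offsetMinutes: number; // minutes UTC is ahead of local time (negative east of UTC)
}

// Read what the browser (and therefore the operating system) reports.
// A VPN changes the network path, not these values.
function readTimezoneSignals(): TimezoneSignals {
  return {
    timeZone: Intl.DateTimeFormat().resolvedOptions().timeZone,
    offsetMinutes: new Date().getTimezoneOffset(),
  };
}

const currentSignals = readTimezoneSignals();
console.log(currentSignals);
```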

This was the fingerprint they didn’t think to hide.

Building the Attacker Profile

The evidence painted a clear picture. A human operator physically located in Manila, working during local business hours, using consumer VPN services to appear US-based. The consistent timing around 9 AM Manila time suggested a regular work shift. The spacing of submissions—spread over hours rather than arriving in milliseconds—indicated human pacing, not pure automation.
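The timing claims can be checked with a few lines of arithmetic. The sketch below assumes submission timestamps are available as ISO 8601 strings in UTC and uses a fixed UTC+8 offset for Manila, which is safe because Manila does not observe daylight saving time. The timestamps are synthetic and the function names are mine.

```typescript
// Hour of day (0-23) for an instant in a zone with a fixed whole-hour UTC offset.
function hourAtFixedOffset(instant: Date, utcOffsetHours: number): number {
  return (((instant.getUTCHours() + utcOffsetHours) % 24) + 24) % 24;
}

// Histogram of submissions per local hour (index = hour of day).
function hourHistogram(timestamps: string[], utcOffsetHours: number): number[] {
  const buckets = new Array<number>(24).fill(0);
  for (const ts of timestamps) {
    buckets[hourAtFixedOffset(new Date(ts), utcOffsetHours)] += 1;
  }
  return buckets;
}

// Gaps between consecutive submissions, in minutes.
function gapsInMinutes(timestamps: string[]): number[] {
  const times = timestamps.map((ts) => new Date(ts).getTime()).sort((a, b) => a - b);
  return times.slice(1).map((t, i) => (t - times[i]) / 60000);
}

// Synthetic UTC timestamps, not taken from the client's data.
const submittedAt = [
  "2024-03-04T01:05:00Z",
  "2024-03-04T01:40:00Z",
  "2024-03-04T03:15:00Z",
];

const MANILA_UTC_OFFSET_HOURS = 8;
const manilaHours = hourHistogram(submittedAt, MANILA_UTC_OFFSET_HOURS);
const gaps = gapsInMinutes(submittedAt);
console.log(manilaHours[9], manilaHours[11], gaps);
```

A cluster in the morning buckets with gaps measured in minutes or hours points to a person working a shift. Gaps measured in milliseconds would point to a script.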

This was likely a click farm worker, the kind you can hire for $50-200 per month through various online services. Someone was paying for this.

The most probable motive was competitor sabotage: a local competitor who wanted to disrupt the client’s business, waste his time, and corrupt his advertising optimization.

Part Four: The Damage Assessment

Beyond Wasted Time

The obvious damage was the wasted hours chasing fake leads—calling bogus phone numbers, responding to fabricated emails, trying to schedule appointments with people who didn’t exist.

But the deeper damage was algorithmic.

Google Ads runs on machine learning. When you enable Smart Bidding (automated systems that decide how much to bid in each ad auction), Google learns from your conversion data. It observes which clicks turn into form submissions, identifies patterns in those successful visitors, and then allocates your budget toward finding more people like them.

Depending on how the campaign’s conversion tracking was configured, some or all of those fake submissions could have registered as conversions—teaching Google’s algorithm exactly the wrong lessons. It would learn to favor visitors matching the fraud profile: datacenter IPs, unusual browser configurations, traffic patterns that looked like the attacker.

The client had noticed the symptoms before understanding the cause. His real leads had dropped precipitously. “No leads in ten days,” he told me, “when I usually see three to five per day.” The campaign was still running. Money was still being spent. But the ads were likely being shown to the wrong people—users who matched the patterns of the fraudulent submissions.

Smart Bidding relies on clean data. Poison the data, and you poison the optimization. The attacker may have only cost a few dollars in direct ad spend, but they had potentially corrupted weeks or months of machine learning.

The Recovery Path

Undoing algorithmic damage takes time. Google’s systems work on rolling windows of data, typically thirty days. The poisoned conversion history would need to age out while new, legitimate data flowed in.

In the meantime, the client had options: file an invalid click report with Google using the GCLID evidence, consider temporarily switching from conversion-based bidding to manual CPC while the data cleaned up, and most importantly—stop the fake submissions from continuing to corrupt the funnel.

Part Five: The Solution

What Wouldn’t Work

Traditional defenses were off the table.

IP blocking was futile. The attacker rotated through VPN addresses constantly. Blocking specific IPs was playing whack-a-mole against an infinite supply of new addresses.

Geo-blocking by IP wouldn’t help either. The VPN exit nodes registered as US traffic.

CAPTCHA alone wouldn’t stop them—and we had proof. The form already had reCAPTCHA v2, the image-based challenge that asks users to identify traffic lights, crosswalks, motor vehicles, etc. Submissions kept coming anyway. This was human-driven fraud; click farm workers solve CAPTCHAs for a living. Adding another challenge would slow down real customers while barely inconveniencing the attacker.

Cloudflare protection was architecturally impossible. The form lived on GoHighLevel’s infrastructure. Traffic never touched Cloudflare. No edge-layer solution could apply.

The Kill Shot: Timezone Validation

If VPNs mask the IP but not the browser environment, the solution was to validate the browser environment directly.

I built a JavaScript validation script that runs inside the form itself, checking three signals before allowing submission.

First, the timezone name. The browser’s Internationalization API reports the operating system’s configured timezone—America/New_York, America/Chicago, and so on. If the timezone isn’t in the list of US zones, that’s a strike.
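A sketch of the first check, under a stated assumption: the allowlist below is my own short list of common IANA zones for the US states, not the list used in the deployed script. It is deliberately incomplete (it omits the Indiana and Kentucky sub-zones and legacy aliases such as "US/Eastern", for example), which is one reason a single failed check should count as a strike rather than a block.

```typescript
// Assumed allowlist of common US IANA zone names; extend it before real use.
const US_TIME_ZONES: ReadonlySet<string> = new Set([
  "America/New_York",
  "America/Detroit",
  "America/Chicago",
  "America/Denver",
  "America/Boise",
  "America/Phoenix",
  "America/Los_Angeles",
  "America/Anchorage",
  "Pacific/Honolulu",
]);

// Check 1: is the browser-reported zone name on the allowlist?
function isUsTimeZoneName(timeZone: string): boolean {
  return US_TIME_ZONES.has(timeZone);
}

console.log(isUsTimeZoneName("America/New_York"), isUsTimeZoneName("Asia/Manila"));
```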

Second, the UTC offset. This is the number of minutes between the user’s local time and UTC as JavaScript’s getTimezoneOffset() reports it: positive for zones behind UTC, negative for zones ahead. US offsets typically range from 240 (Eastern daylight time) to 600 (Hawaii) minutes, depending on the timezone and daylight saving status. Manila is always -480 (eight hours ahead). If the offset doesn’t match plausible US values, that’s another strike.
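The second check reduces to a range test. In this sketch the bounds are the two figures given above, and the sign convention is the one `Date.prototype.getTimezoneOffset()` uses (positive when local time is behind UTC). The constant and function names are mine.

```typescript
// Bounds in minutes, using getTimezoneOffset() sign conventions.
const MIN_US_OFFSET_MINUTES = 240; // Eastern daylight time (UTC-4)
const MAX_US_OFFSET_MINUTES = 600; // Hawaii (UTC-10)

// Check 2: does the current offset fall inside the plausible US range?
function isPlausibleUsOffset(offsetMinutes: number): boolean {
  return offsetMinutes >= MIN_US_OFFSET_MINUTES && offsetMinutes <= MAX_US_OFFSET_MINUTES;
}

console.log(isPlausibleUsOffset(300));  // Eastern standard time
console.log(isPlausibleUsOffset(-480)); // Manila
```

The range is broad on purpose. Offsets alone cannot tell Pennsylvania from anywhere else in the Americas at the same longitude, so this check only catches visitors whose clocks are set far outside it.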

Third, daylight saving time behavior. Most US timezones shift their offset between winter and summer. New York moves from 300 minutes in January to 240 minutes in July. But some US timezones don’t change—Arizona and Hawaii stay constant year-round. And Manila never changes.

The script compares January and July offsets for the user’s reported timezone. If someone claims to be in New York but shows identical offsets in winter and summer, something’s wrong. That’s a third strike.
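One way to express that comparison is shown below. It is a sketch under two assumptions of mine: the only US zones treated as fixed are Arizona and Hawaii (as `America/Phoenix` and `Pacific/Honolulu`), and any other reported zone is expected to show a seasonal shift. The deployed script may handle edge cases differently.

```typescript
// Assumed list of US zones that keep one offset all year.
const US_ZONES_WITHOUT_DST: ReadonlySet<string> = new Set([
  "America/Phoenix",
  "Pacific/Honolulu",
]);

// Offsets the local system reports for mid-winter and mid-summer of a given year.
function seasonalOffsets(year: number): { january: number; july: number } {
  return {
    january: new Date(year, 0, 1).getTimezoneOffset(),
    july: new Date(year, 6, 1).getTimezoneOffset(),
  };
}

// Check 3: does the seasonal behavior match what the claimed zone should do?
function dstBehaviorLooksRight(timeZone: string, january: number, july: number): boolean {
  const shifts = january !== july;
  return US_ZONES_WITHOUT_DST.has(timeZone) ? !shifts : shifts;
}

const { january, july } = seasonalOffsets(new Date().getFullYear());
console.log(january, july);
console.log(dstBehaviorLooksRight("America/New_York", 300, 240)); // shifts as expected
console.log(dstBehaviorLooksRight("Asia/Manila", -480, -480));    // no shift, not a fixed US zone
```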

Any two of these signals failing triggers a block. The submit button disables. A neutral message appears: “We couldn’t submit this request from your current browser configuration. Please contact us by phone if you need help.”
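The decision rule can be sketched as a strike counter plus a small UI update. The `SubmitControl` and `MessageTarget` interfaces are hypothetical stand-ins for the form's real button and message elements (a DOM button and a text node container satisfy them structurally); how they are located inside a GoHighLevel form is not shown here.

```typescript
interface CheckResults {
  nameOk: boolean;   // timezone name is on the US allowlist
  offsetOk: boolean; // current UTC offset is in the plausible US range
  dstOk: boolean;    // January/July behavior matches the claimed zone
}

// Hypothetical stand-ins for the form's submit button and message element.
interface SubmitControl { disabled: boolean; }
interface MessageTarget { textContent: string | null; }

const BLOCK_MESSAGE =
  "We couldn’t submit this request from your current browser configuration. " +
  "Please contact us by phone if you need help.";

// Number of failed checks.
function countStrikes(results: CheckResults): number {
  return [results.nameOk, results.offsetOk, results.dstOk].filter((ok) => !ok).length;
}

// Disable the button and show the neutral message when strikes reach the threshold.
function applyDecision(
  results: CheckResults,
  button: SubmitControl,
  message: MessageTarget,
  threshold = 2,
): boolean {
  const blocked = countStrikes(results) >= threshold;
  button.disabled = blocked;
  message.textContent = blocked ? BLOCK_MESSAGE : "";
  return blocked;
}

// A visitor failing all three checks versus one failing only a single check.
const fakeButton: SubmitControl = { disabled: false };
const fakeMessage: MessageTarget = { textContent: "" };
const blockedVisitor = applyDecision({ nameOk: false, offsetOk: false, dstOk: false }, fakeButton, fakeMessage);
const allowedVisitor = applyDecision({ nameOk: false, offsetOk: true, dstOk: true }, { disabled: false }, { textContent: "" });
console.log(blockedVisitor, allowedVisitor);
```

Requiring two strikes rather than one leaves room for a legitimate visitor who trips a single check, such as someone whose zone name is missing from an incomplete allowlist.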

The message is deliberately vague. The attacker shouldn’t know exactly what triggered the block or how to evade it.

Why This Works

To bypass this validation, the attacker would need to change their actual operating system timezone (not just their VPN location, but their computer’s clock settings) and keep it consistent with wherever the VPN claims they are. That adds friction to a workflow whose economics depend on volume. A worker who could previously submit dozens of fake leads per hour would now need to change system settings, potentially restart their browser, and manage the cognitive overhead of tracking fake timezones.

More importantly, they might not even know what’s blocking them. The form just stops working. No error message explains the timezone check. No CAPTCHA announces its presence. The submit button simply won’t submit.

The 85 fake submissions in the dataset had one thing in common: Asia/Manila timezone. Every single one would fail the new validation. Meanwhile, legitimate customers in Pennsylvania, Ohio, New York—anyone in a US timezone—would pass without ever knowing the check existed.

Part Six: The Bigger Picture

A Common Blind Spot

This engagement revealed a pattern I’ve seen repeatedly in small business security.

Business owners sign up for Cloudflare, see the orange cloud icon, and feel protected. They don’t realize that protection applies only to traffic that passes through Cloudflare’s infrastructure. When their most important forms live on third-party platforms (GoHighLevel, ClickFunnels, HubSpot, any SaaS tool with its own hosting), those forms operate outside the protection perimeter.

This isn’t Cloudflare’s fault or the SaaS platform’s fault. It’s a natural consequence of how modern web architecture works. But the security implications aren’t obvious, and the tools don’t warn you.

The client had spent weeks adding IPs to a blocklist that could never work. He wasn’t doing anything wrong—he just didn’t have visibility into where his traffic actually flowed.

The Lesson for Small Businesses

If your critical forms live on third-party platforms, Cloudflare can’t protect them. Your WAF rules, bot protection, and geo-blocking don’t apply. You need security at the application layer—validation that runs inside the form itself.

This isn’t ideal. Application-layer security is harder to manage, easier to bypass, and requires technical implementation rather than point-and-click configuration. But when your conversion funnel lives outside your infrastructure, it may be the only option.

The Lesson for Investigators

When a client says “my Cloudflare blocklist isn’t working,” check the proxy status first. A gray cloud icon means no Cloudflare protection applies—period. All the rules in the world mean nothing if traffic never touches Cloudflare’s infrastructure.

And when analyzing attack patterns, look beyond IP addresses. Browser fingerprints—timezone, language, rendering characteristics—often reveal more than network-level data. VPNs can mask origin but not environment.

Outcome

The timezone validation script was deployed to the client’s GoHighLevel form. Combined with the existing reCAPTCHA, it created a layered defense: CAPTCHA to slow automated tools, timezone checking to filter geographically implausible submissions.

The client was also advised to file a click fraud report with Google, armed with evidence that should be difficult to dispute: one GCLID appearing across 85 fake submissions, all from a timezone twelve hours away from his service area. The algorithmic damage would take time to heal, but at least the bleeding had stopped.

The client walked away with a working solution, a detailed explanation of what had happened, and a clearer understanding of where his security boundaries actually lay.

Sometimes the most valuable outcome isn’t just fixing the immediate problem. It’s showing the client the map of their own infrastructure, so they can see where the gaps are before someone else finds them.

Related Reading

- Case Study: The Hidden Admin

  Another case where attackers exploited Google's advertising infrastructure for profit.

- Why Local Website Design Companies Can’t Be Trusted With Your Security

  Most small businesses assume their web designer ‘handles security.’ The truth? That false sense of safety is exactly what hackers count on.